Modeling a digital gate with unequal rising and falling delays

The 'buf_xbit' and 'inv_xbit' primitives model digital gates with xbit-type signals. Their 'delay' parameter sets the gate delay, but it applies equally to both rising and falling transitions. How can I model a digital gate with unequal rising and falling delays?

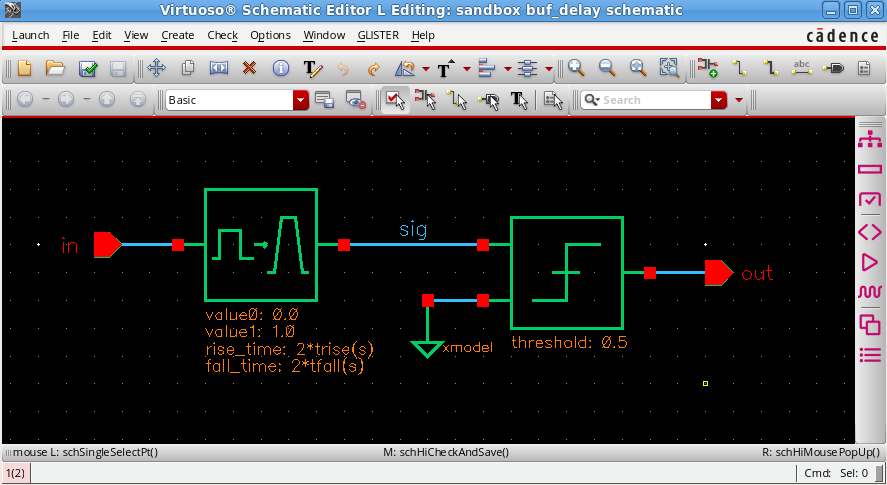

Here is a simple trick to model a digital gate with unequal rising and falling delays, using the 'transition' and 'slice' primitives. The screenshot below illustrates the idea. First, the 'transition' primitive converts the xbit-type input 'in' to an xreal-type signal 'sig' switching between 0.0 and 1.0 with non-zero rise and fall times. Second, the 'slice' primitive converts the signal 'sig' back to an xbit-type output 'out', using the threshold level of 0.5.

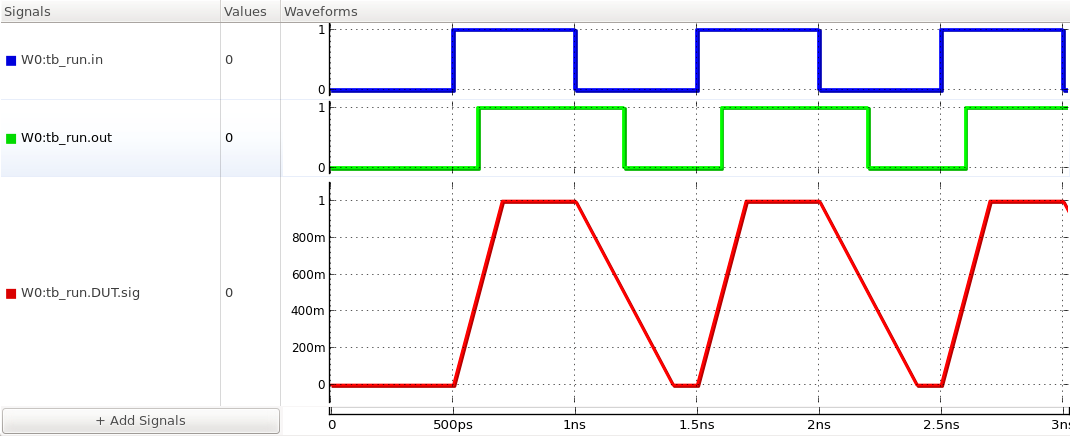

The 'slice' primitive switches its output when 'sig' crosses 0.5, which a linear ramp between 0.0 and 1.0 reaches halfway through the transition. So by setting the 'rise_time' and 'fall_time' parameters of the 'transition' primitive to twice the desired rising and falling delays, respectively, you can model a digital gate with unequal rising and falling delays. The waveforms shown below illustrate the operation when the rising delay ('trise') is set to 100ps and the falling delay ('tfall') is set to 200ps.
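
To check the arithmetic, here is a small Python sketch of the idea. It is not XMODEL code: it only assumes that 'sig' ramps linearly between 0.0 and 1.0 and that the output flips the moment 'sig' crosses the threshold. The function name `ramp_crossing_delay` and the constants are hypothetical, introduced just for this illustration.

```python
def ramp_crossing_delay(transition_time, rising, threshold=0.5):
    """Delay from an input edge until a linear ramp crosses the threshold.

    Assumes the ramp runs linearly from 0.0 to 1.0 (rising) or from
    1.0 to 0.0 (falling) over `transition_time` seconds.
    """
    if not 0.0 < threshold < 1.0:
        raise ValueError("threshold must lie strictly between 0 and 1")
    # Rising ramp:  value = t / T      -> crosses at t = threshold * T
    # Falling ramp: value = 1 - t / T  -> crosses at t = (1 - threshold) * T
    fraction = threshold if rising else 1.0 - threshold
    return fraction * transition_time


# Desired gate delays from the example above (assumed values: 100ps and 200ps).
TRISE = 100e-12
TFALL = 200e-12

# Set the transition times to twice the desired delays.
rise_time = 2 * TRISE
fall_time = 2 * TFALL

rise_delay = ramp_crossing_delay(rise_time, rising=True)
fall_delay = ramp_crossing_delay(fall_time, rising=False)

print(f"rising delay:  {rise_delay * 1e12:.0f} ps")
print(f"falling delay: {fall_delay * 1e12:.0f} ps")
```

With a threshold of 0.5 the crossing point is the midpoint of either ramp, which is why the factor of two works for both edges. A different threshold would need a different scale factor for each edge.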